hostname: login.marconi.cineca.it

early availability: 27/06/2016

start of production (Broadwell): 04/07/2016

start of production (Knights Landing): 04/01/2017

start of production (Skylake): 07/08/2017

Marconi is the new Tier-0 system, co-designed by Cineca and based on the Lenovo NeXtScale platform, that replaces the former IBM BG/Q system (FERMI). MARCONI, based on the next generation of the Intel® Xeon Phi™ product family alongside the Intel® Xeon® processor E5-2600 v4 product family, offers the scientific community a technologically advanced and energy-efficient high-performance computing system.

This achievement represents the first step of the Italian infrastructure development plan put forward by the Cineca governing bodies, aimed at supporting scientific research. The global plan entails an investment of Euro 50 million in two phases. The first, just started, will make available a computational power of about 20Pflop/s and a data storage capacity of more than 20 petabytes, which will go into production, reaching completion in the second half of 2017. The second phase will start during 2019, with a final goal to increase available computing power to approximately 50 to 60 Pflop/s by 2020.

The new system, logically named ‘MARCONI’, will gradually be completed in about 12 months, between April 2016 and July 2017, according to a plan based on a series of updates:

- A1: a preliminary system going into production in July 2016, based on Intel® Xeon® processor E5-2600 v4 product family (Broadwell) with a computational power of 2Pflop/s.

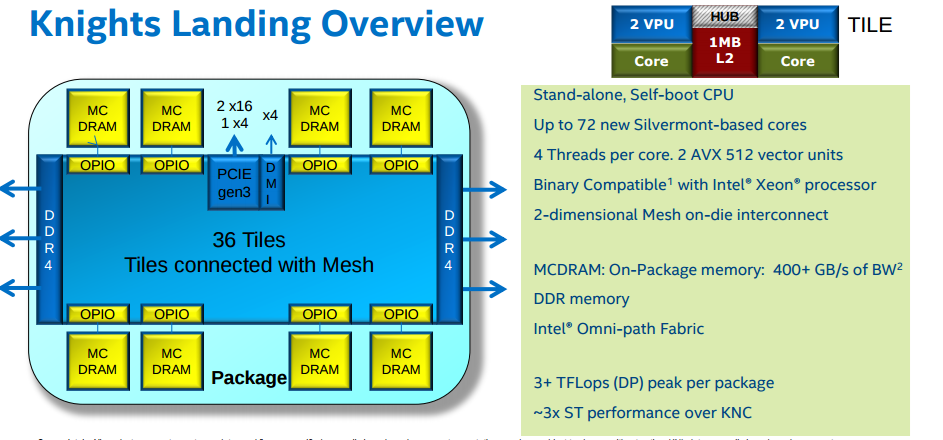

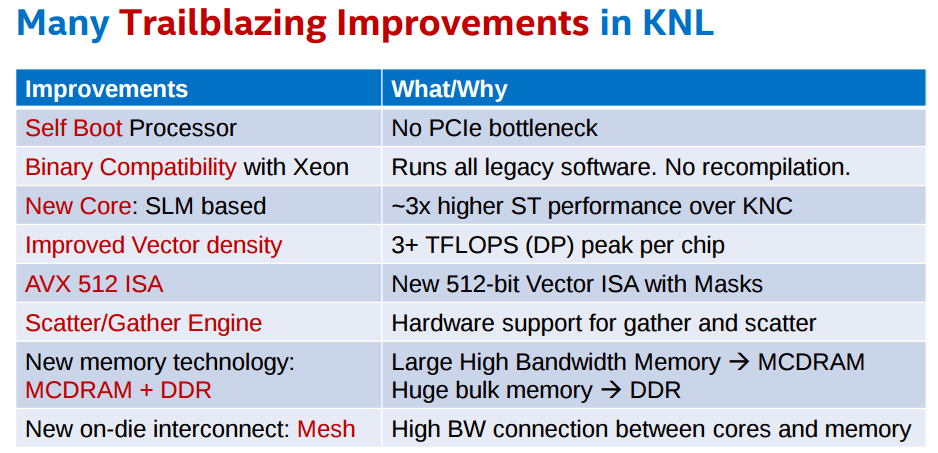

- A2: a new section has been added at the start of 2017, equipped with the next-generation of the Intel Xeon Phi product family (Knights Landing), based on a many-core architecture, enabling an overall configuration of about 250 thousand cores with expected additional computational power of approximately 11Pflop/s.

- A3: finally, in July 2017, this system is planned to reach a total computational power of about 20Pflop/s making use of the future generation Intel Xeon processors (Skylake).

This supercomputer takes advantage of the new Intel® Omni-Path Architecture, which provides the high performance interconnectivity required to efficiently scale the system’s thousands of servers.

A high-performance Lenovo GSS storage subsystem, that integrates the IBM Spectrum Scale™ (GPFS) file system, is connected to the Intel Omni-Path Fabric and provides data storage capacity.

The progressive development of the Marconi system will allow the use of state-of-the-art processor technology, enabling an extremely high-performance system that still has a ‘green’ soul. One of the parameters of the project developed by the Cineca team is in fact to gradually increase the computational power up to 50Pflop/s without exceeding, at any stage, the limit of 3MWatt power consumption (see the full press release: https://wiki.u-gov.it/confluence/display/SCAIUS/Marconi+press+release+-+English).

The "Marconi operation" is completed in three steps: first Marconi A1, then Marconi A2 and, finally, the installation of Marconi A3.

Marconi A2 has 3600 Intel Adam Pass servers, for a total of 11 PFlop/s. Each single-socket node hosts one Intel Xeon Phi processor, code name Knights Landing (KNL), with 68 cores @ 1.4GHz, 96 GByte of DDR4 and 16 GByte of MCDRAM.

System Architecture

Architecture: Intel OmniPath Cluster

Internal Network: Intel OmniPath Architecture 2:1

Disk Space: 17PB (raw) of local storage

Login nodes: 8 Login (3 available for regular users). Each one contains 2 x Intel Xeon Processor E5-2697 v4 with a clock of 2.30GHz and 128 GB of memory. Login nodes are shared between three partitions: A1 (BDW), A2 (KNL) and A3 (SKL). The three partitions are served by three different PBS servers that must be selected in order to address the required resource.

System A1 (Broadwell)

Model: Lenovo NeXtScale

Racks: 21

System A2 (Knights Landing)

Model: Lenovo Adam Pass

Racks: 50

Peak Performance: 11 PFlop/s

System A3 (Skylake)

Model: Lenovo Stark

Racks: 21

Marconi Network

Network type: new Intel Omnipath, 100 Gb/s. MARCONI is the largest Omnipath cluster in the world.

Network topology: Fat-tree 2:1 oversubscription tapering at the level of the core switches only.

Core Switches: 5 x OPA Core Switch "Sawtooth Forest", 768 ports each.

Edge Switch: 216 OPA Edge Switch "Eldorado Forest", 48 ports each.

Maximum system configuration: 5(opa) x 768 (ports) x 2 (tapering) → 7680 servers.
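The maximum configuration quoted above is a plain product of the number of core switches, the ports per switch and the tapering factor. A minimal shell sketch of that arithmetic, with illustrative variable names that are not part of any Marconi tool:

```shell
# Figures taken from the network description above; variable names are illustrative.
core_switches=5        # OPA core switches
ports_per_switch=768   # ports on each core switch
tapering=2             # 2:1 oversubscription at the core level

max_servers=$(( core_switches * ports_per_switch * tapering ))
echo "Maximum servers: ${max_servers}"
```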

KNL is the evolution of Knights Corner (KNC), part of the Xeon Phi family and currently available on CINECA's system GALILEO. The main differences between KNC and KNL are better performance per watt, a faster internal network, and the fact that KNLs are treated as standalone processors, unlike KNCs, which are treated as accelerators. Applications compiled for KNL are also binary compatible with regular computing nodes.

KNL supports the Intel AVX-512 instruction set extensions. The same three login nodes serve the Marconi-Broadwell (Marconi-A1) and the Marconi-KNL (Marconi-A2) partitions and queueing systems. The storage devices are also shared between the two partitions.

This supercomputer is available to European researchers as a Tier-0 system of the PRACE (www.prace-project.eu) infrastructure, as well as to Italian public and industrial researchers.

Part of this system (MARCONI_Fusion) is reserved for the activity of EUROfusion (https://www.euro-fusion.org/). Details on the MARCONI_Fusion environment are reported in a dedicated document.

Access

All the login nodes have an identical environment and can be reached with SSH (Secure Shell) protocol using the "collective" hostname:

> login.marconi.cineca.it

which establishes a connection to one of the available login nodes.

For information about data transfer from other computers, please follow the instructions and caveats in the dedicated section Data storage, or in the document Data Management.

Accounting

For accounting information please consult our dedicated section.

The account_no (or project) is important for batch executions. You need to indicate an account_no to be accounted for in the scheduler, using the flag "-A"

#PBS -A <account_no>

Please remember that different projects are usually active on different hosts. With the "saldo -b" command you can list all the account_no associated with your username. On systems like MARCONI, where different partitions are available in the same environment, you need to specify the host name in the "saldo" command:

> saldo -b (reports projects defined on Marconi-BDW - default)

> saldo -b --knl (reports projects defined on Marconi-KNL)

Budget Linearization policy

On MARCONI a linearization policy for the usage of project budgets has been defined and implemented. For each account, a monthly quota is defined as:

monthTotal = (total_budget / total_no_of_months)

Starting from the first day of each month, the collaborators of any account are allowed to use the quota at full priority. As the budget is consumed, the jobs submitted from the account gradually lose priority, until the monthly budget (monthTotal) is fully consumed. From that moment, their jobs are still considered for execution, but with a lower priority than the jobs from accounts that still have some monthly quota left.

This policy is similar to those already applied by other important HPC centers in Europe and worldwide. The goal is to improve the response time, giving users the opportunity to use the CPU hours assigned to their project in proportion to its actual size (total amount of core-hours).
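As a worked illustration of the monthTotal formula, the sketch below spreads a project budget evenly over its duration. The function name and the sample figures are hypothetical, and shell integer division is used, so any remainder is dropped:

```shell
# Monthly quota under the linearization policy: total budget divided by the
# project duration. Function name and sample figures are hypothetical.
month_total() {
    # $1 = total budget (core-hours), $2 = project duration (months)
    echo $(( $1 / $2 ))
}

# A hypothetical project with 1200000 core-hours lasting 12 months:
month_total 1200000 12
```

Once the account has consumed this many core-hours within a month, its jobs keep running but at lower priority until the next month starts.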

Disks and Filesystems

The storage organization conforms to the CINECA infrastructure (see Section Data Storage and Filesystems).

In addition to the home directory $HOME, a scratch area $CINECA_SCRATCH is defined for each user: a large disk for the storage of run-time data and files.

A $WORK area is defined for each active project on the system, reserved for all the collaborators of the project. This is a safe storage area to keep run time data for the whole life of the project.

| | Total Dimension (TB) | Quota (GB) | Notes |
|---|---|---|---|
| $HOME | 200 | 50 | |
| $CINECA_SCRATCH | 2500 | no quota | |
| $WORK | 7100 | 1000 | |

$DRES points to the shared repository where Data RESources are maintained. This is a data archive area available only on request, shared with all CINECA HPC systems and among different projects. $DRES is not mounted on the compute nodes. This means that you cannot access it within a batch job: all data needed during the batch execution has to be moved to $WORK or $CINECA_SCRATCH before the run starts.

Since all the filesystems are based on the IBM Spectrum Scale™ file system (formerly GPFS), the usual unix command "quota" does not work. Use the local command cindata to query disk usage and quota ("cindata -h" for help):

> cindata

Modules environment

As usual, the software modules are collected in different profiles and organized by functional category (compilers, libraries, tools, applications,..).

On Marconi a new feature has been added to the module environment: the profiles are of two types, “domain” type (chem, phys, lifesc,..) for production activity and “programming” type (base and advanced) for compilation, debugging and profiling activities, and the two types can be loaded together.

Applications available on MARCONI are compiled so that they can run on both the A1 and A2 partitions. At present not all software has been recompiled in a KNL-optimized version, but the binaries built for the Broadwell nodes can run on the KNL nodes as well. We will inform you via newsletter when optimized versions of all software are available.

The "base" profile is the default. It is automatically loaded after login and contains basic modules for programming activities (Intel and GNU compilers, math libraries, profiling and debugging tools,..).

If you want to use a module placed under another profile, for example an application module, you first have to load the corresponding profile:

>module load profile/<profile name>

>module load autoload <module name>

For listing all profiles you have loaded use the following command:

>module list

In order to detect all profiles, categories and modules available on Marconi the command “modmap” is available:

>modmap

Production environment

Since MARCONI is a general purpose system and it is used by several users at the same time, long production jobs must be submitted using a queuing system. This guarantees that the access to the resources is as fair as possible.

Roughly speaking, there are two different modes to use an HPC system: Interactive and Batch. For a general discussion see the section Production Environment and Tools.

Interactive

A serial program can be executed in the standard UNIX way:

> ./program

This is allowed only for very short runs, since the interactive environment has a 10-minute time limit: for longer runs please use the "batch" mode.

A parallel program can be executed interactively only within an "Interactive" SLURM batch job, using the "srun" command: the job is queued and scheduled as any other job, but when executed, the standard input, output, and error streams are connected to the terminal session from which srun was launched.

For example, to start an interactive session with the MPI program myprogram, using one node, two processors, launch the command:

> srun -N1 -n2 --ntasks-per-node=2 -A <account_name> --pty /bin/bash

SLURM will then schedule your job to start, and your shell will be unresponsive until free resources are allocated for you.

When the shell comes back with the prompt, you can execute your program by typing:

> mpirun ./myprogram

SLURM automatically exports the environment variables you defined in the source shell, so if you need to run your program myprogram in a controlled environment (e.g. with specific library paths or options), you can prepare the environment in the origin shell and be sure to find it in the interactive shell that SLURM runs for you on the allocated resources.

Batch

The info reported here refer to the general MARCONI partition. The production environment of MARCONI_Fusion is discussed in a separate document.

As usual on systems using SLURM, you can submit a script script.x using the command:

> sbatch script.x

You can get a list of defined partitions with the command:

> sinfo -a

For more information and examples of job scripts, see section Batch Scheduler PBS.

Submitting serial Batch jobs

The serial partition is available with one core and a maximum walltime of 4 hours. It runs on the login nodes and is designed for pre/post-processing serial analysis, and for moving your data (via rsync, scp etc.) in case more than 10 minutes are required to complete the data transfer. In order to use this partition you have to specify the SLURM flag "-p":

#SBATCH -p serial

Submitting Batch jobs for A1 partition

On MARCONI it is possible to submit jobs of different types, using a "routing" partition: just declare how many resources you need and your job will be directed into the right production partition (bdwdebug, bdwprod or bdwbigprod) with a correct priority. Furthermore, there are two additional partitions not managed by the default routing, devoted to specific categories of jobs: the serial partition and the special partition.

The maximum number of cores that you can request is 6000 (about 167 nodes) with a maximum walltime of 24 hours:

- If you do not specify the walltime (by means of the #SBATCH --time directive), a default value of 30 minutes will be assumed.

- If you do not specify the number of cores (by means of the "#SBATCH -n" directive), a default value of 36 will be assumed.

- If you do not specify the amount of memory (as the value of the "#SBATCH --mem" directive), a default value of 3GB will be assumed.

- Even though you can request up to 123GB, we strongly suggest limiting the requested memory per node to 118 GB to avoid memory swapping to disk, with serious performance degradation.
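The defaults listed above can be overridden explicitly in the job script. The sketch below writes a minimal A1 job script to a file; the file name, the requested resources and the program name myprogram are placeholders, and the "G" memory suffix is the unit notation accepted by sbatch:

```shell
# Write a minimal A1 job script that sets walltime, cores and memory explicitly.
# File name, resources and program name are placeholders; replace <account_no>.
cat > job_bdw.sh <<'EOF'
#!/bin/bash
# walltime (default 30 minutes, maximum 24 hours)
#SBATCH --time=01:00:00
# one full Broadwell node: 36 cores (also the default core count)
#SBATCH -N 1
#SBATCH -n 36
# memory per node (suggested upper limit, default 3GB)
#SBATCH --mem=118G
# project to be charged
#SBATCH -A <account_no>

mpirun ./myprogram
EOF
```

The resulting file can then be submitted with "sbatch job_bdw.sh"; since no partition is named, the routing described above selects the production partition.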

The special partition is designed for non-ordinary types of jobs, and users need to be enabled in order to use it. Please write to superc@cineca.it if you think you need to use it.

Submitting Batch jobs for A2 partition

Marconi-A2 production environment is based on the latest release of PBS scheduler/resource manager.

After the first month of the testing/pre-production phase, we introduced a simplified and more robust way to manage job submission to the KNL (A2) partition. Our first configuration relied on routing queues defined on the A1 PBS server, which however suffered from two major drawbacks concerning chained and interactive jobs.

The new configuration can take advantage of the definition of a PBS variable, which is automatically set when loading the "env-knl" module, available in the "base" profile. The loading of the module also modifies the (user-defined or default) prompt on the login nodes by putting the (KNL) string in front of it:

[username@r000u07l02 ~]$ module load env-knl

(KNL) [username@r000u07l02 ~]$

to remind users that they are using the production environment serving the A2 partition. In fact, once the module is loaded, the PBS environment is set according to the PBS server defined on the A2 partition, and jobs are submitted directly to the A2 queues instead of passing through the routing queues defined on the A1 partition. With respect to the previous configuration, the submission process is simplified: you no longer need to load the specific "env-knl" module to submit jobs to partitions based on Knights Landing processors. Instead, you simply need to specify the correct partition using the "#SBATCH -p" directive, choosing from the following list:

knldebug

knlprod

knlbigprod

knlspecial

You simply need to specify the correct queue in the #PBS -q <queue> directive in the case of special queues, or no queue for the default one (replacing the A1 routing queues "knl" and "knltest"). Loading this environment directly exposes the login nodes to the KNL production partition; for instance, you will only see the queues defined on the KNL nodes with the "qstat -q" command, and the usual commands "qsub", "qstat" and "qdel" will act on the A2 PBS queues. Please note that, unlike on the A1 partition (where the job number suffices to identify a job), and as on A3, on A2 the full job id is required (i.e. <job_number>.<PBS A2 server>, for instance 382113.r064u06s01).

Like in the A1 production environment, the A2-queues knldebug and knlprod, are not directly accessible and are served by the default routing queue "knlroute". This queue does not need to be requested, it being the default queue on the A2 PBS environment, and it will route the jobs either to the knldebug or to the knlprod (depending on the number of requested nodes and the walltime). The knlroute queue accepts jobs for the "shared" A2 partition, while a specific (dedicated) queue, xfuaknlprod, needs to be used for the MARCONI-Fusion KNL nodes.

Each KNL node exposes itself to SLURM as having 68 cores (corresponding to the physical cores of the KNL processor). Jobs should request the entire node (hence, #SBATCH -n 68), and the KNL PBS server is configured so as to assign the KNL nodes in an exclusive way (even if fewer ncpus are requested). Hyper-threading is enabled, hence you can run up to 272 processes/threads on each assigned node.
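The two figures above (68 cores and 272 processes/threads per node) imply 4 hardware threads per core. A minimal sketch of how they constrain a hybrid MPI/OpenMP layout on one node; the number of MPI ranks is a hypothetical choice and the variable names are illustrative:

```shell
# Hardware threads per KNL node, from the figures above.
cores_per_node=68
threads_per_node=272
threads_per_core=$(( threads_per_node / cores_per_node ))

# OpenMP threads available to each MPI rank when the node is fully subscribed.
# The rank count is a hypothetical choice.
mpi_ranks=68
omp_threads=$(( threads_per_node / mpi_ranks ))
echo "threads/core=${threads_per_core} OMP_NUM_THREADS=${omp_threads}"
```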

The preliminary configuration of the Marconi-A2 partition allowed users to explore different HBM modes (on-package high-bandwidth memory based on the multi-channel dynamic random access memory (MCDRAM) technology) and clustering modes of cache operations. Please refer to the official Intel documentation for a description of the different modes. Following the suggestions of the Intel experts, we finally adopted a single configuration for all the KNL racks serving the knldebug and knlprod (academic) queues, namely:

- cache/quadrant

The queues serving the Marconi FUSION partition instead allow the use of nodes in flat/quadrant or cache/quadrant modes; please refer to the dedicated document.

The additional queue knltest (which needs to be explicitly requested via the "#PBS -q knltest" directive) is defined on racks which have been configured in the following modes (one rack, i.e. 72 KNL nodes, for each configuration):

- cache/quadrant

- flat/quadrant

The knltest queue has restricted access and is used to tune and optimize other configuration parameters and system tips. At the moment it is dedicated to internal tests; please contact superc@cineca.it for additional information.

Please note that the "mode" configuration is subject to change; we will notify you as soon as possible after each configuration change.

Two "custom resources" have been defined at the chunk-level (mcdram and numa) to request nodes in a specific configuration. The resource mcdram can assume the value "flat" or "cache" (valid for Marconi FUSION queues and the knltest), the numa resource can be only quadrant for all queues. The default values are mcdram=cache and numa=quadrant, hence for ordinary jobs there is no need to specify them.

The maximum memory which can be requested is 90 GB for cache nodes and 105 GB for flat nodes. However, to avoid memory swapping to disk with the associated performance degradation, we strongly suggest requesting no more than 86 GB for cache nodes and 101 GB for flat nodes.

For example, to request a single KNL node in a production queue the following PBS job script can be used:

#!/bin/bash

#PBS -l select=1:ncpus=68:mpiprocs=68:mem=86GB:mcdram=cache:numa=quadrant

#PBS -l walltime=0:30:00

#PBS -A <account_no>

... # Other PBS resource requests

PATH_TO_EXECUTABLE > output_file

#PBS -l select=1:ncpus=68:mpiprocs=68:mem=86GB

This will enqueue the job on the knldebug queue. In the present configuration, all jobs requesting up to two KNL nodes and less than 30 minutes will be queued on the knldebug (defined on a pool of reserved nodes). All other jobs will end up in the knlprod queue.
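The routing rule just described can be sketched as a small shell function. The function name is hypothetical, and a walltime of exactly 30 minutes is treated as eligible for knldebug, an assumption based on the 0:30:00 example script above:

```shell
# Sketch of the knlroute routing rule. The function name is hypothetical;
# a 30-minute walltime is assumed to be still eligible for knldebug.
knl_target_queue() {
    # $1 = requested KNL nodes, $2 = requested walltime in minutes
    if [ "$1" -le 2 ] && [ "$2" -le 30 ]; then
        echo knldebug
    else
        echo knlprod
    fi
}

knl_target_queue 1 30     # small and short: knldebug
knl_target_queue 4 30     # more than two nodes: knlprod
knl_target_queue 2 120    # longer than 30 minutes: knlprod
```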

As already mentioned, if the "env-knl" module is loaded, the command "qstat" will list all the jobs submitted on the A2 partition. Analogously, to have the list of jobs submitted by a specific user on the KNL nodes the usual flag "-u" will provide the information:

(KNL) [username@r000u07l02 ~]$ qstat -u $USER -w

The "-w" (wide) option is important because it provides a wider and more complete view of the status of your job. With regular qstat, the jobid might appear truncated and therefore be unusable with the two commands below. "qstat -w" guarantees a full and usable display of the jobid, along with other details about your job.

To obtain a full display of the job status, given a job_id reported by the qstat command, you can use the "-f" flag

(KNL) [username@r000u07l02 ~]$ qstat -f <job_id>

and to delete a job, you need to type:

(KNL) [username@r000u07l02 ~]$ qdel <job_id>

Remember that, for the previous two commands to work properly, the full job id is required, as reported by the command "qstat -u $USER -w" (e.g., 382113.r064u06s01).

By unloading the env-knl module you will restore the default PBS configuration (and the default prompt); all PBS commands will hence refer to the server installed on the Broadwell (A1) partition.

Loading the module is the recommended option for submitting jobs to the KNL partition, but an alternative method is provided for those users who frequently use both partitions (even though we suggest starting two separate shells to deal with the two production environments). When logging in to Marconi's login nodes, the default PBS environment refers to the Broadwell partition (hence, all the PBS commands refer to queues and jobs submitted on the Broadwell nodes). If you don't load the env-knl module, you can always query the A2 PBS server (and submit jobs to the A2 nodes) by explicitly providing the server to be queried. Two aliases have been defined to identify the primary and secondary A2 PBS servers, namely knl1 and knl2. Hence, with the command:

[username@r000u07l02 ~] qstat -q

you will see the list of A1 queues, while the command:

[username@r000u07l02 ~] qstat -q @knl1

or

[username@r000u07l02 ~] qstat -q @knl2

will report the list of queues defined on the A2 partition. The PBS service is offered in High-Availability mode, hence it relies on a primary (knl1) and a secondary (knl2) server. Depending on which one is active, the first or the second command needs to be issued. If you load the env-knl module, the configuration file automatically selects the active server, while if you want to query the A2 PBS servers explicitly you should query the primary (knl1) server first and, if the connection is closed, query the secondary one (knl2). The same applies to all the other uses of the "qstat" command. For example, to see all the jobs submitted by a user, the command:

[username@r000u07l02 ~] qstat -u $USER

will provide the list of jobs submitted to the Broadwell partition, while the command:

[username@r000u07l02 ~] qstat -u $USER @knl1

will provide the list of jobs submitted to the KNL partition.
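The "primary first, then secondary" procedure can be wrapped in a small shell function. This is only a sketch: the function name is hypothetical, and it assumes that qstat exits with a non-zero status when the queried server cannot be reached:

```shell
# Query the A2 PBS servers without loading env-knl: try the primary alias
# first and fall back to the secondary one. Assumes qstat returns a non-zero
# status when the connection fails; the function name is hypothetical.
qstat_knl() {
    qstat "$@" @knl1 2>/dev/null || qstat "$@" @knl2
}

# Usage on a Marconi login node:
#   qstat_knl -q
#   qstat_knl -u "$USER" -w
```

Error messages from the primary server are discarded so that only the output of the server that answers is shown.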

Analogously, to submit a job to the A1 partition the standard command:

[username@r000u07l02 ~] qsub <submission_script>

will assign the job to Broadwell nodes, while the command:

[username@r000u07l02 ~] qsub -q knlroute@knl1 <submission_script>

will direct the job to the KNL partition (the submission_script must obviously correctly refer to the different resources available on Broadwell and KNL nodes). If the secondary PBS server is up, all the above commands will need to refer to the @knl2 server.

To delete an A2 job from A1:

[username@r000u07l02 ~] qdel <jobid>@knl1

Be sure that there are no spaces between the jobid and "@knl1". Also make sure that you use the full jobid that is returned from "qstat -u $USER -w", because without the -w option the jobid might be truncated.
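A small helper can guard against two of the mistakes mentioned above: spaces in the argument and a bare job number without the server part. The function name is hypothetical, and the expected form <job_number>.<server> follows the example 382113.r064u06s01; an id truncated in the middle of the server name cannot be detected this way:

```shell
# Build the "<jobid>@knl1" argument for qdel. Rejects ids containing spaces
# or other stray characters, and ids lacking the ".<server>" part.
# The function name is hypothetical.
knl_qdel_target() {
    case "$1" in
        *[!0-9A-Za-z._-]*|"") return 1 ;;        # spaces, stray characters, empty
        [0-9]*.?*) printf '%s@knl1\n' "$1" ;;    # number, dot, server name
        *) return 1 ;;                           # e.g. a bare job number
    esac
}

knl_qdel_target 382113.r064u06s01   # prints 382113.r064u06s01@knl1

# Usage on a Marconi login node:
#   qdel "$(knl_qdel_target 382113.r064u06s01)"
```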

Finally, the symmetric module env-bdw is also available in the "base" profile to load the production environment defined on the Broadwell partition (and change the prompt accordingly, by putting (BDW) in front of the user-defined or default prompt). Loading this module is (apart from the change of prompt) completely equivalent to unloading the "env-knl" module. Hence, if you loaded the env-knl module and you want to go back to the A1 production environment, you can either load the env-bdw module (which will also change the prompt) or unload the env-knl module (which will restore the original user-defined or default prompt).

Submitting Batch jobs for A3 partition

In this preliminary phase the A3 partition is exclusive to Eurofusion users. In the second phase it will be opened to the scientific community.

The new configuration can take advantage of the definition of a PBS variable, which is automatically set when loading the "env-skl" module, available in the "base" profile. The loading of the module also modifies the (user-defined or default) prompt on the login nodes by putting the (SKL) string in front of it:

[username@r000u07l02 ~]$ module load env-skl

(SKL) [username@r000u07l02 ~]$

to remind users that they are using the production environment serving the A3 partition. In fact, once the module is loaded, the PBS environment is set according to the PBS server defined on the A3 partition, and jobs are submitted directly to the A3 queues instead of passing through the routing queues defined on the A1 partition. In the first period the A3 partition is reserved for Eurofusion users. Loading this environment directly exposes the login nodes to the SKL production partition; for instance, you will only see the queues defined on the SKL nodes with the "qstat -q" command, and the usual commands "qsub", "qstat" and "qdel" will act on the A3 PBS queue. In the second period the A3 partition will be opened to a wider scientific community and more queues will be added.

Each SKL node exposes itself to PBS as having 48 cores (corresponding to the 48 physical cores of the SKL processor). Jobs should request the entire node (hence, ncpus=48), and the SKL PBS server is configured so as to assign the SKL nodes in an exclusive way (even if fewer ncpus are requested).

The maximum memory which can be requested is 180 GB and this value guarantees that no memory swapping will occur.

For example, to request a single SKL node in a production queue the following PBS job script can be used:

#!/bin/bash

#PBS -l select=1:ncpus=48:mpiprocs=48:mem=180GB

#PBS -l walltime=0:30:00

#PBS -A <account_no>

#PBS -q

... # Other PBS resource requests

PATH_TO_EXECUTABLE > output_file

This will enqueue the job on the queue.

As already mentioned, if the "env-skl" module is loaded, the command "qstat" will list all the jobs submitted on the A3 partition. Analogously, to have the list of jobs submitted by a specific user on the SKL nodes the usual flag "-u" will provide the information:

(SKL) [username@r000u07l02 ~]$ qstat -u $USER -w

The complete list of the user's jobs is displayed with the full jobid. To obtain a full display of the job status, given a job_id reported by the qstat command, you can use the "-f" flag

(SKL) [username@r000u07l02 ~]$ qstat -f <job_id>

and to delete a job, you need to type:

(SKL) [username@r000u07l02 ~]$ qdel <job_id>

For the previous commands to work properly, please note that, unlike on the A1 partition (where the job number suffices to identify a job), and as on A2, on A3 the full job id is required, as reported by the command "qstat -u $USER -w" (i.e. <job_number>.<PBS A3 server>, for instance 37239.r000u26s04).

By unloading the env-skl module you will restore the default PBS configuration (and the default prompt); all PBS commands will hence refer to the server installed on the Broadwell (A1) partition.

Loading the module is the recommended option for submitting jobs to the SKL partition, but an alternative method is provided for those users who frequently use several partitions (even though we suggest starting separate shells to deal with the different production environments). When logging in to Marconi's login nodes, the default PBS environment refers to the Broadwell partition (hence, all the PBS commands refer to queues and jobs submitted on the Broadwell nodes). If you don't load the env-skl module, you can always query the A3 PBS server (and submit jobs to the A3 nodes) by explicitly providing the server to be queried. Two aliases have been defined to identify the A3 PBS servers: the primary server skl1 and the secondary server skl2. Hence, with the command:

[username@r000u07l02 ~] qstat -q

you will see the list of A1 queues, while the command:

[username@r000u07l02 ~] qstat -q @skl1

or

[username@r000u07l02 ~] qstat -q @skl2

will report the list of queues defined on the A3 partition.

If you load the env-skl module, the configuration file automatically selects the active server, while if you want to query the A3 PBS servers explicitly you should query the primary (skl1) server first and, if the connection is closed, query the secondary one (skl2). The same applies to all the other uses of the "qstat" command. For example, to see all the jobs submitted by a user, the command:

[username@r000u07l02 ~] qstat -u $USER

will provide the list of jobs submitted to the Broadwell partition, while the command:

[username@r000u07l02 ~] qstat -u $USER @skl1

will provide the list of jobs submitted to the SKL partition.

To delete an A3 job from A1 or A2 partition:

[username@r000u07l02 ~] qdel <jobid>@skl1

Be sure that there are no spaces between the jobid and "@skl1". Also make sure that you use the full jobid that is returned from "qstat -u $USER -w", because without the -w option the jobid might be truncated.

Finally, you can change partition (from A3 to A1 or A2) by loading the env-bdw or env-knl module, which also changes the prompt by putting (BDW) or (KNL) in front of the user-defined or default prompt. Alternatively, you can unload the env-skl module and thereby restore the original prompt.

Summary

In the following table you can find all the main features and limits imposed on the queues of the shared A1 and A2 partitions. For Marconi-FUSION dedicated queues please refer to the dedicated document.

| Queue name | Partition | Execution queue | # cores per job | max walltime | max running jobs per user / max n. of cpus per user | max memory per job | priority | HBM/clustering mode | notes |
|---|---|---|---|---|---|---|---|---|---|
| route | A1 | debug | min = 1, max = 144 | 02:00:00 | 4/144 | 123 GB/node (suggested: 118 GB/node) | 40 | | managed by route; runs on 24 nodes shared with the visualrcm queue |
| route | A1 | prod | min = 1, max = 2304 | 24:00:00 | 20/2304 | 123 GB/node (suggested: 118 GB/node) | 50 | | managed by route |
| route | A1 | bigprod | min = 2305, max = 6000 | 24:00:00 | 1/6000 | 123 GB/node (suggested: 118 GB/node) | 60 | | managed by route |
| special | A1 | special | min = 1, max = 36 | 180:00:00 | | 123 GB/node (suggested: 118 GB/node) | 100 | | ask superc@cineca.it; #PBS -q special |
| serial | A1 | serial | 1 | 04:00:00 | max 12 jobs on this queue, max 4 jobs per user | 1 GB | 30 | | #PBS -q serial |
| visualrcm | A1 | visualrcm | min = 1, max = 144 | 03:00:00 | 1/144 | 123 GB/node (suggested: 118 GB/node) | 40 | | runs on 24 nodes shared with the debug queue |
| knlroute | A2 | knldebug | min = 1, max = 136 (2 nodes) | 00:30:00 | 5/340 | 90 GB/node (mcdram=cache), suggested: 86 GB/node | 40 | mcdram=cache, numa=quadrant | managed by knlroute; runs on 144 dedicated nodes |
| knlroute | A2 | knlprod | min > 136, max = 68000 (1000 nodes) | 24:00:00 | 20/68000 | 90 GB/node (mcdram=cache), suggested: 86 GB/node | 50 | mcdram=cache, numa=quadrant | managed by knlroute |
| knltest | A2 | knltest | min = 1, max = 952 (14 nodes) | 24:00:00 | - | 90 GB/node (mcdram=cache), suggested: 86 GB/node; 105 GB/node (mcdram=flat), suggested: 101 GB/node | 30 | mcdram=<cache/flat>, numa=quadrant | ask superc@cineca.it; #PBS -q knltest; #PBS -W group_list=<account_no> |

Marconi-A3 is restricted to the Eurofusion community; no queues are available for general production.

PLEASE NOTE: the PBS characteristics have not been completely defined for MARCONI yet, so some changes are possible.

Graphic session

If you need a graphic session, we recommend using the RCM (Remote Connection Manager) tool. For additional information, see the Remote Visualisation section of our User Guide.

The service will become available in the coming weeks.

Programming environment

The programming environment of the MARCONI machine consists of a choice of compilers for the main scientific languages (Fortran, C and C++), debuggers to help users find bugs and errors in their codes, and profilers to help in code optimisation.

In general you must "load" the correct environment even for programming tools like compilers, since "native" compilers are not available.

If you use a given set of compilers and libraries to create your executable, you will most likely have to define the same "environment" when you run it. Since linking is dynamic by default on Linux systems, at runtime the application needs the compiler shared libraries as well as any other proprietary libraries. This means that you have to specify "module load" for compilers and libraries both at compile time and at run time. To minimize the number of modules needed at runtime, compile your applications with static linking.
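A quick way to verify that the runtime environment matches the build environment is to list the shared libraries that the executable cannot resolve. The following is only a minimal sketch, not a MARCONI tool: the function names and the executable name ./myexec are placeholders, and it assumes the standard ldd utility is available on the node.

```shell
# Hypothetical helpers (not part of the MARCONI environment).

# Read ldd-style output on stdin and print the names of unresolved libraries.
parse_missing_libs() {
    awk '/=> not found/ { print $1 }'
}

# Print the unresolved shared libraries of an executable.
# Returns 1 if at least one library is missing, 0 otherwise.
check_runtime_libs() {
    local exe="$1" missing
    missing=$(ldd "$exe" 2>/dev/null | parse_missing_libs)
    if [ -n "$missing" ]; then
        echo "Unresolved libraries for $exe (load the modules used at build time):"
        echo "$missing"
        return 1
    fi
    echo "No unresolved shared libraries reported for $exe."
}

# Example: check ./myexec only if it exists in the current directory.
if [ -x ./myexec ]; then
    check_runtime_libs ./myexec
fi
```

If a library is reported as missing, loading the same compiler and library modules used at compile time should normally make it resolvable again.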

Compilers

You can check the complete list of available compilers on MARCONI with the command:

> module available

and checking the "compilers" section. In general, the available compilers are:

INTEL (ifort, icc, icpc) :

> module load intel

GNU (gcc, g++, g77, gfortran):

> module load gnu

PGI - Portland Group (pgf77,pgf90,pgf95,pghpf, pgcc, pgCC):

> module load profile/advanced

> module load pgi

After loading the appropriate module, use the "man" command to get the complete list of the flags supported by the compiler, for example:

> module load intel

> man ifort

Some flags are common to all these compilers; others are compiler-specific. The most common ones are listed below.

1. If you want to use a specific library or a particular include file, you have to give their paths, using the following options:

-I/path_include_files specify the path of the include files

-L/path_lib_files -l<xxx> specify a library lib<xxx>.a in /path_lib_files

2. If you want to debug your code, you have to turn off optimisation and turn on runtime checks: these flags are described in the following section.

3. If you want to compile your code for normal production, you have to turn on optimisation by choosing a higher optimisation level:

-O2 or -O3 Higher optimisation levels

Other flags are available for specific compilers and are reported later.

INTEL Compilers

Initialize the environment with the module command:

> module load intel

The names of the Intel compilers are:

- ifort: Fortran77 and Fortran90 compiler

- icc: C compiler

- icpc: C++ compiler

The documentation can be obtained with the man command after loading the relevant module:

> man ifort

> man icc

Some miscellaneous flags are described in the following:

-extend_source Extend over the 77 column F77's limit

-free / -fixed Free/Fixed form for Fortran

-ip Enables interprocedural optimization for single-file compilation

-ipo Enables interprocedural optimization between files - whole program optimisation

Compiling for KNL

Since KNL nodes are binary compatible with the legacy x86 instruction set, any code compiled for the normal A1 Marconi nodes will run on these nodes. However, a specific compiler option is needed to generate AVX-512 instructions and obtain better performance from these nodes.

Version 15.0 and newer of the Intel compilers can generate these instructions if you specify either the -xMIC-AVX512 flag (which generates KNL-specific AVX-512 instructions, hence the binary will not work on the Broadwell partition) or the -axMIC-AVX512 flag (which generates an executable containing both a generic code path and an AVX-512 one, so that it runs on both partitions):

module load intel

icc -axMIC-AVX512 -O3 -o executable source.c

icpc -axMIC-AVX512 -O3 -o executable source.cc

ifort -axMIC-AVX512 -O3 -o executable source.f
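Before running a binary built with -xMIC-AVX512, it can be useful to check that the node actually exposes the KNL flavour of AVX-512. The sketch below is an illustration under stated assumptions: the function name is hypothetical, and it relies on the Linux /proc/cpuinfo flag names avx512f, avx512cd, avx512er and avx512pf, which together correspond to the MIC-AVX512 instruction set.

```shell
# Hypothetical helper: succeed only if the given cpuinfo file (default:
# /proc/cpuinfo) lists all four AVX-512 subsets used by MIC-AVX512 code.
has_mic_avx512() {
    local cpuinfo="${1:-/proc/cpuinfo}" flag
    for flag in avx512f avx512cd avx512er avx512pf; do
        grep -qw "$flag" "$cpuinfo" 2>/dev/null || return 1
    done
}

if has_mic_avx512; then
    echo "KNL-style AVX-512 detected: binaries built with -xMIC-AVX512 can run here"
else
    echo "KNL-style AVX-512 not detected: use a binary built with -axMIC-AVX512 or without AVX-512 flags"
fi
```

Run on a login node, the check is expected to take the second branch, since only the A2 compute nodes are KNL.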

There are certain considerations to be taken into account before running legacy codes on KNL nodes. Primarily, the effective use of vector instructions is critical to achieve good performance on KNL cores. For guidelines on how to get vectorization information and improve code vectorization, refer to "How to Improve Code Vectorization".

PORTLAND Group (PGI)

Initialize the environment with the module command:

> module load profile/advanced

> module load pgi

The names of the PGI compilers are:

- pgf77: Fortran77 compiler

- pgf90: Fortran90 compiler

- pgf95: Fortran95 compiler

- pghpf: High Performance Fortran compiler

- pgcc: C compiler

- pgCC: C++ compiler

The documentation can be obtained with the man command after loading the relevant module:

> man pgf95

> man pgcc

Some miscellaneous flags are described in the following:

-Mextend To extend over the 77 column F77's limit

-Mfree / -Mfixed Free/Fixed form for Fortran

-fast Chooses generally optimal flags for the target platform

-fastsse Chooses generally optimal flags for a processor that supports SSE instructions

GNU compilers

The GNU compilers are always available, but they are not the best optimizing compilers. You do not need to load a module to use them.

The names of the GNU compilers are:

- g77: Fortran77 compiler

- gfortran: Fortran95 compiler

- gcc: C compiler

- g++: C++ compiler

The documentation can be obtained with the man command:

> man gfortran

> man gcc

Some miscellaneous flags are described in the following:

-ffixed-line-length-132 To extend over the 77 column F77's limit

-ffree-form / -ffixed-form Free/Fixed form for Fortran

Debuggers and Profilers

If your code dies at runtime, there is a problem to track down. To solve it, you can either analyze the core file (core not available with PGI compilers) or run your code under a debugger.

Compiler flags

Whichever you choose, you need to enable the compiler runtime checks by adding specific flags at compilation time. In the following we describe those flags for the different Fortran compilers: if you are using the C or C++ compilers, please check their documentation first, because the flags may differ.

The following flags are generally available for all compilers and are mandatory for an easier debugging session:

-O0 Lower level of optimisation

-g Produce debugging information

Other flags are compiler specific and are described in the following:

INTEL Fortran compiler

The following flags are useful (in addition to "-O0 -g") for debugging your code:

-traceback generate extra information to provide source file traceback at run time

-fp-stack-check generate extra code to ensure that the floating-point stack is in the expected state

-check bounds enables checking for array subscript expressions

-fpe0 allows some control over floating-point exception handling at run-time

PORTLAND Group (PGI) Compilers

The following flags are useful (in addition to "-O0 -g") for debugging your code:

-C Add array bounds checking

-Ktrap=ovf,divz,inv Controls the behavior of the processor when exceptions occur: FP overflow, divide by zero, invalid operands

GNU Fortran compilers

The following flags are useful (in addition to "-O0 -g") for debugging your code:

-Wall Enables warnings pertaining to usage that should be avoided

-fbounds-check Checks for array subscripts.
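The debugging flags listed above for the three Fortran compilers can be collected in a small shell helper, so that a build script does not have to repeat them. This is only a sketch: the function name debug_flags and the family labels intel, pgi and gnu are illustrative choices, not part of the MARCONI environment.

```shell
# Hypothetical helper: print the debugging flags described in this section
# for a given compiler family (intel, pgi or gnu).
debug_flags() {
    case "$1" in
        intel) echo "-O0 -g -traceback -fp-stack-check -check bounds -fpe0" ;;
        pgi)   echo "-O0 -g -C -Ktrap=ovf,divz,inv" ;;
        gnu)   echo "-O0 -g -Wall -fbounds-check" ;;
        *)     echo "unknown compiler family: $1" >&2; return 1 ;;
    esac
}

# Example: print the gfortran command line for a debug build of myprog.f90.
echo "gfortran $(debug_flags gnu) -o myexec myprog.f90"
```

The example only prints the command line; remove the echo and the quotes to actually run the compilation.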

Debuggers available

Totalview

NOTE: if debugging with Intel compiler suite, you may want to add in your jobscript the following line, before the execution of TotalView:

source $INTELMPI_HOME/bin64/mpivars.sh debug

to provide a better population of the message queue.

Scalasca

....

In the following we report information about other ways to debug your codes:

IDB (serial debugger for INTEL compilers)

The Intel Debugger (idb) is a source-level, symbolic debugger that lets you:

- Control the execution of individual source lines in a program.

- Set stops (breakpoints) at specific source lines or under various conditions.

- Change the value of variables in your program.

- Refer to program locations by their symbolic names

- Print the values of variables and set tracepoints

- Perform other functions (examining core files, examining the call stack, displaying registers)

The idb debugger has two modes: dbx (default mode) and gdb (optional mode). For complete information about idb, see the online Intel Debugger (IDB) Manual in:

$INTEL_HOME/Documentation/en_US/idb

To use this debugger, you should specify the ifort command and the debugging command-line options described above, then you run your executable inside the "idb" environment:

> module load intel

> ifort -O0 -g -traceback -fp-stack-check -check bounds -fpe0 -o myexec myprog.f90

> idb ./myexec

On MARCONI the debugger runs in GUI mode by default. You can also start the debugger in command line mode on these systems by specifying idbc instead of idb in the command line.

PGI: pgdbg (serial/parallel debugger)

pgdbg is the Portland Group Inc. symbolic source-level debugger for F77, F90, C, C++ and assembly language programs. It is capable of debugging applications that exhibit various levels of parallelism, including:

- Single-thread, serial applications

- Multi-threaded applications

- Distributed MPI applications

- Any combination of the above

There are two forms of the command used to invoke pgdbg. The first is used when debugging non-MPI applications, the second form, using mpirun, is used when debugging MPI applications:

> pgdbg [options] ./myexec [args]

> mpirun [options] -dbg=pgdbg ./myexec [args]

More details can be found in the online documentation, using the "man pgdbg" command after loading the module.

To use this debugger, you should compile your code with one of the PGI compilers and the debugging command-line options described above, then run your executable inside the "pgdbg" environment:

> module load pgi

> pgf90 -O0 -g -C -Ktrap=ovf,divz,inv -o myexec myprog.f90

> pgdbg ./myexec

By default, pgdbg presents a graphical user interface (GUI). A command-line interface is also provided through the "-text" option.

GNU: gdb (serial debugger)

GDB is the GNU Project debugger and allows you to see what is going on 'inside' your program while it executes -- or what the program was doing at the moment it crashed.

GDB can do four main kinds of things (plus other things in support of these) to help you catch bugs in the act:

- Start your program, specifying anything that might affect its behavior.

- Make your program stop on specified conditions.

- Examine what has happened, when your program has stopped.

- Change things in your program, so you can experiment with correcting the effects of one bug and go on to learn about another.

More details can be found in the online documentation, using the "man gdb" command.

To use this debugger, you should compile your code with one of the GNU compilers and the debugging command-line options described above, then run your executable inside the "gdb" environment:

> gfortran -O0 -g -Wall -fbounds-check -o myexec myprog.f90

> gdb ./myexec

VALGRIND

Valgrind is a framework for building dynamic analysis tools. There are Valgrind tools that can automatically detect many memory management and threading bugs, and profile your programs in detail. The Valgrind distribution currently includes six production-quality tools: a memory error detector, two thread error detectors, a cache and branch-prediction profiler, a call-graph generating cache profiler, and a heap profiler.

Valgrind is Open Source / Free Software, and is freely available under the GNU General Public License, version 2.

To analyse a serial application:

- Load Valgrind module --> module load valgrind

- Load module for the compiler and compile your code with the compiler you prefer (Use -O0 -g flags)

- Run the executable under Valgrind.

If you normally run your program like this:

myprog arg1 arg2

Use this command line:

valgrind (valgrind-options) myprog arg1 arg2

Memcheck is the default tool. You can add the --leak-check=full option to turn on the detailed memory leak detector. Your program will run much slower than normal, and use a lot more memory. Memcheck will issue messages about the memory errors and leaks that it detects.

To analyse a parallel (MPI) application:

- Load Valgrind module --> module load valgrind

- Load modules for compiler and openmpi libraries (at present only available for intel and gnu)

- Compile your code with the "-O0 -g" flags both at compiling and linking time

- Run the executable under Valgrind (both in interactive and in batch mode)

mpirun -np 4 valgrind (valgrind-options) myprog arg1 arg2
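With several MPI tasks the Valgrind messages of all processes are interleaved on the terminal. One common approach is to give each process its own log through Valgrind's --log-file option, where %p expands to the process ID, and then summarize the logs. The sketch below assumes that approach; the log name vg.%p.log and the helper name are illustrative, and the launch line is kept as a comment because it needs MPI and Valgrind to be loaded.

```shell
# Suggested launch line (one log file per MPI process):
#   mpirun -np 4 valgrind --leak-check=full --log-file=vg.%p.log myprog arg1 arg2

# Hypothetical helper: for each Valgrind log given, print "<file>: <n> errors",
# taking <n> from the "ERROR SUMMARY" line written at the end of the log.
summarize_valgrind_logs() {
    local f
    for f in "$@"; do
        [ -f "$f" ] || continue
        awk -v name="$f" '/ERROR SUMMARY:/ { print name ": " $4 " errors" }' "$f"
    done
}

# Summarize whatever logs are present in the current directory (none is fine).
summarize_valgrind_logs vg.*.log
```

Tasks reporting a non-zero count are the ones whose logs are worth reading in full.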

Core file analysis

In order to understand what problem was affecting your code, you can also try a "core file" analysis. Since core files are usually quite large, be sure to work in the /scratch area.

There are several steps to follow:

- Increase the limit for possible core dumping

> ulimit -c unlimited (bash)

> limit coredumpsize unlimited (csh/tcsh)

- If you are using Intel compilers, set to TRUE the decfort_dump_flag environment variable

> export decfort_dump_flag=TRUE (bash)

> setenv decfort_dump_flag TRUE (csh/tcsh)

- Compile your code with the debug flags described above.

- Run your code and create the core file.

- Analyze the core file using different tools depending on the original compiler.

INTEL compilers

> module load intel

> ifort -O0 -g -traceback -fp-stack-check -check bounds -fpe0 -o myexec prog.f90

> ulimit -c unlimited

> export decfort_dump_flag=TRUE

> ./myexec

> ls -lrt

-rwxr-xr-x 1 aer0 cineca-staff 9652 Apr 6 14:34 myexec

-rw------- 1 aer0 cineca-staff 319488 Apr 6 14:35 core.25629

> idbc ./myexec core.25629

PGI compilers

> module load pgi

> pgf90 -O0 -g -C -Ktrap=ovf,divz,inv -o myexec myprog.f90

> ulimit -c unlimited

> ./myexec

> ls -lrt

-rwxr-xr-x 1 aer0 cineca-staff 9652 Apr 6 14:34 myexec

-rw------- 1 aer0 cineca-staff 319488 Apr 6 14:35 core.25666

> pgdbg -text -core core.25666 ./myexec

GNU Compilers

> gfortran -O0 -g -Wall -fbounds-check -o myexec prog.f90

> ulimit -c unlimited

> ./myexec

> ls -lrt

-rwxr-xr-x 1 aer0 cineca-staff 9652 Apr 6 14:34 myexec

-rw------- 1 aer0 cineca-staff 319488 Apr 6 14:35 core.25555

> gdb ./myexec core.25555
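When only the call stack at the moment of the crash is needed, the core file can also be inspected without an interactive session, using gdb's -batch and -ex options. The following sketch assumes gdb is in the PATH; the function name core_backtrace is hypothetical and the file names are the ones used in the example above.

```shell
# Hypothetical helper: print the backtrace stored in a core file and exit.
# Usage: core_backtrace <executable> <core file>
core_backtrace() {
    local exe="$1" core="$2"
    if [ ! -f "$exe" ] || [ ! -f "$core" ]; then
        echo "usage: core_backtrace <executable> <core file>" >&2
        return 1
    fi
    if ! command -v gdb >/dev/null 2>&1; then
        echo "gdb not found in PATH" >&2
        return 1
    fi
    # -batch: exit after running the commands; -ex bt: print the backtrace.
    gdb -batch -ex bt "$exe" "$core"
}

# Report the current core file size limit (0 means no core file is written).
echo "core file size limit: $(ulimit -c)"

# Example (uncomment once the core file exists):
# core_backtrace ./myexec core.25555
```

If the reported limit is 0, run "ulimit -c unlimited" in the same shell before launching the program, otherwise no core file will be produced.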

Profilers (gprof)

In software engineering, profiling is the investigation of a program's behavior using information gathered as the program executes. The usual purpose of this analysis is to determine which sections of a program to optimize - to increase its overall speed, decrease its memory requirement or sometimes both.

A (code) profiler is a performance analysis tool that, most commonly, measures only the frequency and duration of function calls, but there are other specific types of profilers (e.g. memory profilers) in addition to more comprehensive profilers, capable of gathering extensive performance data.

gprof

The GNU profiler gprof is a useful tool for measuring the performance of a program. It records the number of calls to each function and the amount of time spent there, on a per-function basis. Functions which consume a large fraction of the run-time can be identified easily from the output of gprof. Efforts to speed up a program should concentrate first on those functions which dominate the total run-time.

gprof uses data collected by the -pg compiler flag to construct a text display of the functions within your application (call tree and CPU time spent in every subroutine). It also provides quick access to the profiled data, which let you identify the functions that are the most CPU-intensive. The text display also lets you manipulate the display in order to focus on the application's critical areas.

Usage:

> gfortran -pg -O3 -o myexec myprog.f90

> ./myexec

> ls -ltr

.......

-rw-r--r-- 1 aer0 cineca-staff 506 Apr 6 15:33 gmon.out

> gprof myexec gmon.out

It is also possible to profile at code line level (see "man gprof" for other options). In this case you must also use the "-g" flag at compilation time:

> gfortran -pg -g -O3 -o myexec myprog.f90

> ./myexec

> ls -ltr

.......

-rw-r--r-- 1 aer0 cineca-staff 506 Apr 6 15:33 gmon.out

> gprof --annotated-source myexec gmon.out

It is possible to profile MPI programs. In this case the environment variable GMON_OUT_PREFIX must be defined, so that each task writes a different statistics file. After setting

export GMON_OUT_PREFIX=<name>

each task will create, once the run is finished, a file with its process ID (PID) as extension:

<name>.$PID

If the environment variable is not set, every task will write to the same gmon.out file.
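The per-task files can then be merged into a single profile with the -s (--sum) option of gprof, which writes the combined data to gmon.sum. The sketch below shows one possible way to do this; the prefix gmon.task, the executable name myexec and the helper name are placeholders, and file names are assumed not to contain spaces.

```shell
# Hypothetical helper: list the per-task profile files written with a given
# GMON_OUT_PREFIX, one per line (prints nothing if there are none).
list_task_profiles() {
    local prefix="$1" f
    for f in "$prefix".*; do
        [ -f "$f" ] && echo "$f"
    done
    return 0
}

# Example with the placeholder prefix "gmon.task": merge all task profiles
# into gmon.sum, then print the combined report.
profiles=$(list_task_profiles gmon.task)
if [ -n "$profiles" ] && command -v gprof >/dev/null 2>&1; then
    gprof -s myexec $profiles   # writes gmon.sum in the current directory
    gprof myexec gmon.sum
fi
```

Analysing a single task file with "gprof myexec <name>.$PID" remains useful when you suspect load imbalance between tasks.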

Scientific libraries

MKL

The Intel Math Kernel Library (Intel MKL) enables improving performance of scientific, engineering, and financial software that solves large computational problems. Intel MKL provides a set of linear algebra routines, fast Fourier transforms, as well as vectorized math and random number generation functions, all optimized for the latest Intel processors, including processors with multiple cores.

Intel MKL is thread-safe and extensively threaded using the OpenMP technology.

Documentation can be found in:

${MKLROOT}/../Documentation/en_US/mkl

To use MKL in your code you need to load the module, then define includes and libraries at compile and link time:

> module load mkl

> icc -I$MKL_INC -L$MKL_LIB -lmkl_intel_lp64 -lmkl_core -lmkl_sequential

For more information please refer to the documentation.

Parallel programming

Parallel programming on MARCONI is based on the IntelMPI and OpenMPI versions of MPI. The libraries and the special wrappers to compile and link your programs are contained in several modules, one for each supported suite of compilers.

The four main parallel-MPI commands for compilation are:

- mpiifort (IntelMPI) / mpif90 (OpenMPI) (Fortran90)

- mpiifort (IntelMPI) / mpif77 (OpenMPI) (Fortran77)

- mpiicc (IntelMPI) / mpicc (OpenMPI) (C)

- mpiicpc (IntelMPI) / mpicxx (OpenMPI) (C++)

These command names refer to wrappers around the actual compilers; they behave differently depending on the module you have loaded.

> module avail intelmpi

intelmpi/2017--binary(default) intelmpi/5.1--binary

> module load intel/pe-xe-2017--binary intelmpi/2017--binary

> man mpiifort

> mpiifort -o myexec myprog.f90 (uses the ifort compiler)

Please note that the IntelMPI mpif90 wrapper is meant for the GNU gfortran compiler rather than Intel ifort. If you need to use the IntelMPI libraries with the GNU gfortran compiler, you need to redefine the I_MPI_F90 variable:

> module load autoload intelmpi/2017--binary

> export I_MPI_F90=gfortran

> mpif90 -o myexec myprog.f90 (uses the gfortran compiler)

With the above setting the system gfortran (4.8) compiler will be used. You can use a more recent version by loading the gnu/6.1.0 module together with intelmpi/2017--binary.

To use OpenMPI with the GNU compilers:

> module avail openmpi

openmpi/1-10.3--gnu--6.1.0

> module load gnu openmpi/1-10.3--gnu--6.1.0

> man mpif90

> mpif90 -o myexec myprog.f90 (uses the gfortran compiler)

The parallel applications have to be executed with the command:

> mpirun ./myexec

There are limitations on running parallel programs in the login shell. You should use the "Interactive PBS" mode, as described in the "Interactive" section earlier on this page.
