...

System A2 (Knights Landing), out of production since January 2020

Model: Lenovo Adam Pass

Racks: 50

Peak Performance: 11 PFlop/s (see details in UG3.1.2)

System A3 (Skylake)

Model: Lenovo Stark

Racks: xx

...

Network type: Intel Omnipath, 100 Gb/s. At the time of setup, MARCONI was the largest Omnipath cluster in the world.

Network topology: Fat-tree with 2:1 oversubscription, tapering at the level of the core switches only.

Core Switches: 5 x OPA Core Switch "Sawtooth Forest", 768 ports each.

Edge Switches: 216 x OPA Edge Switch "Eldorado Forest", 48 ports each.

Maximum system configuration: 5(opa) x 768 (ports) x 2 (tapering) → 7680 servers.
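The arithmetic behind the maximum configuration can be checked with a few lines of shell. This is only a sketch: the variable names are illustrative and not part of any MARCONI tooling, and the values are the ones quoted in the network description.

```shell
#!/bin/bash
# Values quoted in the network description; variable names are illustrative.
core_switches=5        # OPA core switches
ports_per_switch=768   # ports on each core switch
tapering=2             # 2:1 oversubscription at the core level

max_servers=$((core_switches * ports_per_switch * tapering))
echo "Maximum system configuration: ${max_servers} servers"
```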

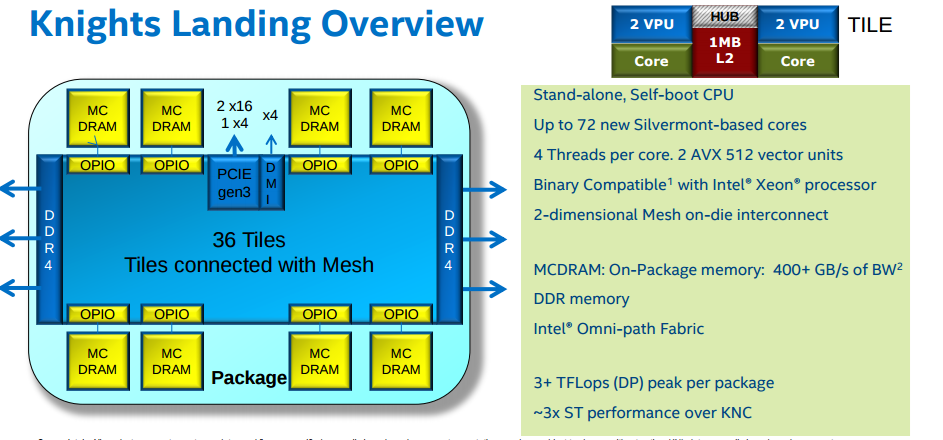

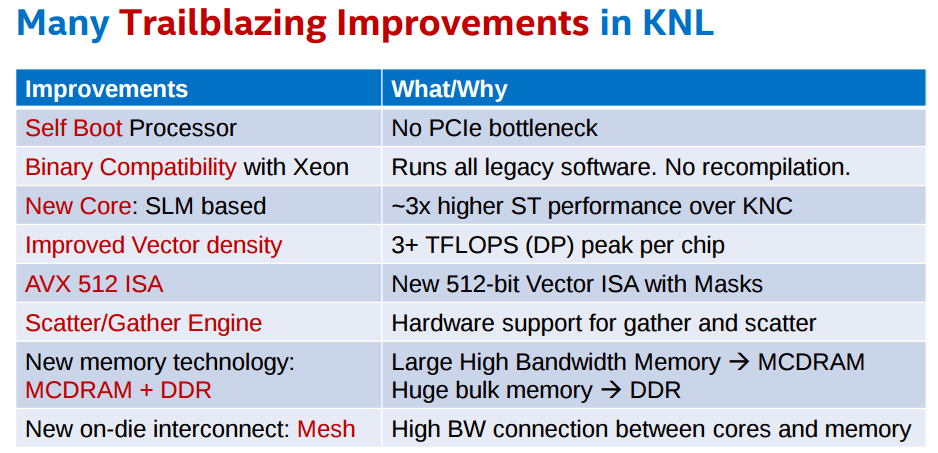

KNL is the evolution of Knights Corner (KNC), available on GALILEO until January 2018. The main differences between KNC and KNL are:

...

This supercomputer is available to European researchers as a Tier-0 system of the PRACE (www.prace-project.eu) infrastructure, as well as to Italian public and industrial researchers.

Part of this system (MARCONI_Fusion) is reserved for the activity of EUROfusion (https://www.euro-fusion.org/). Details on the MARCONI_Fusion environment are reported in a dedicated document.

Access

All the login nodes have an

...

Applications compiled for KNL are binary compatible with regular computing nodes.

KNL supports Intel AVX-512 instruction set extensions. The same three login nodes serve the Marconi-Broadwell (Marconi-A1) and the Marconi-KNL (Marconi-A2) partitions and queueing systems.

Storage devices are shared between the two partitions.


Access

All the login nodes have an identical environment and can be reached with SSH (Secure Shell) protocol using the "collective" hostname:

...

#SBATCH -p bdw_all_serial

Submitting Batch jobs for A2 partition

On MARCONI it is possible to submit jobs requiring different resources by specifying the corresponding partition. If you do not specify the partition, your jobs will run on the bdw_all_serial partition.

If you do not specify the walltime (by means of the #SBATCH --time directive), a default value of 30 minutes will be assumed.
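As a rough illustration of these defaults, the sketch below scans a job script for a partition and a walltime directive and reports which default would apply. The function name check_job_defaults is hypothetical (it is not a SLURM or MARCONI command), and the check only covers the common spellings of the two directives.

```shell
#!/bin/bash
# check_job_defaults is a hypothetical helper, not a SLURM or MARCONI command.
# It reports which of the defaults described above would apply to a job script.
check_job_defaults() {
    local script="$1"
    # Look for "#SBATCH -p <name>" or "#SBATCH --partition=<name>".
    if ! grep -Eq '^#SBATCH[[:space:]]+(-p|--partition)[[:space:]=]' "$script"; then
        echo "no partition specified: the job will run on bdw_all_serial"
    fi
    # Look for "#SBATCH -t <time>" or "#SBATCH --time=<time>".
    if ! grep -Eq '^#SBATCH[[:space:]]+(-t|--time)[[:space:]=]' "$script"; then
        echo "no walltime specified: a default of 30 minutes will be assumed"
    fi
}

# Usage: check_job_defaults job.sh
```

A script that sets both directives produces no output.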

[username@r000u07l02 ~]$

Compared with the previous configuration, the submission process has been simplified. You no longer need to load the specific "env-knl" module to submit jobs on partitions based on Knights Landing processors. Instead, you simply need to specify the correct partition using the "#SBATCH -p" directive. By choosing a knl_***_*** partition, you can be sure that your job will run on KNL nodes.

Each KNL node presents itself to SLURM as having 68 cores (corresponding to the physical cores of the KNL processor). Jobs should request the entire node (hence, #SBATCH -n 68), and the KNL SLURM server is configured to assign KNL nodes exclusively, even if fewer cpus are requested. Hyper-threading is enabled, so you can run up to 272 processes/threads on each assigned node.
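The core and thread counts above imply four hardware threads per physical core. The sketch below works through that arithmetic and derives a thread count for a hybrid MPI/OpenMP run. The layout of one rank per physical core is only an assumed example, and exporting OMP_NUM_THREADS is the usual OpenMP convention rather than something this guide prescribes.

```shell
#!/bin/bash
# Figures from the text: 68 physical cores per KNL node and up to 272
# processes/threads per node with hyper-threading enabled.
cores_per_node=68
max_threads_per_node=272
hw_threads_per_core=$((max_threads_per_node / cores_per_node))

# Assumed example layout: one MPI rank per physical core.
ranks_per_node=68
threads_per_rank=$((max_threads_per_node / ranks_per_node))

echo "hardware threads per core: ${hw_threads_per_core}"
echo "OpenMP threads per rank:   ${threads_per_rank}"

# In a job script this would typically be exported before calling srun.
export OMP_NUM_THREADS="${threads_per_rank}"
```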

The configuration of the Marconi-A2 partition allowed jobs to request different HBM modes (HBM is the on-package high-bandwidth memory based on multi-channel dynamic random access memory (MCDRAM) technology) and different clustering modes of cache operations:

#SBATCH --constraint=flat/cache

Please refer to the official Intel documentation for a description of the different modes.

For the queues serving the Marconi FUSION partition, please refer to the dedicated document.

The maximum memory that can be requested is 86000 MB for cache/flat nodes:

#SBATCH --mem=86000

(the default unit of measurement, if not specified, is MB)

For flat nodes, jobs can request the KNL high bandwidth memory (HBM) using Slurm's Generic RESource (GRES) options:

#SBATCH --constraint=flat

#SBATCH --mem=86000

For example, to request a single KNL node in a production queue, the following SLURM job script can be used:

#!/bin/bash

#SBATCH -N 1

#SBATCH -A <account_name>

#SBATCH --mem=86000

#SBATCH -p knl_usr_prod

#SBATCH --time 00:05:00

#SBATCH --job-name=KNL_batch_job

#SBATCH --mail-type=ALL

#SBATCH --mail-user=<user_email>

srun ./myexecutable ...
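One way to use the script above is to save it to a file and pass it to sbatch. The sketch below writes the script with a here-document and calls sbatch only when the command is available. The file name knl_job.sh is an arbitrary choice. The entries <account_name>, <user_email> and ./myexecutable are the same placeholders as above and must be replaced before a real submission.

```shell
#!/bin/bash
job_script="knl_job.sh"   # hypothetical file name

# The quoted 'EOF' keeps the placeholders and the script body literal.
cat > "$job_script" <<'EOF'
#!/bin/bash
#SBATCH -N 1
#SBATCH -A <account_name>
#SBATCH --mem=86000
#SBATCH -p knl_usr_prod
#SBATCH --time 00:05:00
#SBATCH --job-name=KNL_batch_job
#SBATCH --mail-type=ALL
#SBATCH --mail-user=<user_email>

srun ./myexecutable
EOF

# Submit only where SLURM is installed (for example on a login node).
if command -v sbatch >/dev/null 2>&1; then
    sbatch "$job_script"
    squeue -u "$USER"     # list your own pending and running jobs
else
    echo "sbatch not found: ${job_script} written but not submitted"
fi
```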

Submitting Batch jobs for A3 partition

...

| MARCONI Partition | SLURM partition | QOS | # cores per job | max walltime | max running jobs per user / max n. of cpus/nodes per user | max memory per node (MB) | priority | HBM/clustering mode | notes |
|---|---|---|---|---|---|---|---|---|---|
| front-end | bdw_all_serial (default partition) | noQOS | max = 6 (max mem = 18000 MB) | 04:00:00 | 6 cpus | 18000 | 40 | | |
| A1 | | qos_rcm | min = 1 max = 48 | 03:00:00 | 1/48 | 182000 | - | | runs on 24 nodes shared with the debug queue on SKL |
| A2 | knl_usr_dbg | no QOS | min = 1 node max = 2 nodes | 00:30:00 | 5/5 | 86000 (cache) | 40 | | runs on 144 dedicated nodes |
| A2 | knl_usr_prod | no QOS | min = 1 node max = 195 nodes | 24:00:00 | 1000 nodes | 86000 (cache) | 40 | | |
| | | knl_qos_bprod | min = 196 nodes max = 1024 nodes | 24:00:00 | 1/1000 | 86000 (cache) | 85 | | #SBATCH -p knl_usr_prod #SBATCH --qos=knl_qos_bprod |
| | | qos_special | >1024 nodes | >24:00:00 (max = 195 nodes for user) | | 86000 (cache) | 40 | | #SBATCH --qos=qos_special request to superc@cineca.it |
| A3 | skl_usr_dbg | no QOS | min = 1 node max = 4 nodes | 00:30:00 | 4/4 | 182000 | 40 | | runs on 24 dedicated nodes |
| A3 | skl_usr_prod | no QOS | min = 1 node max = 64 nodes | 24:00:00 | 64 nodes | 182000 | 40 | | |
| | | skl_qos_bprod | min = 65 max = 256 | 24:00:00 | 1/256 1 jobs per account | 182000 | 85 | | #SBATCH -p skl_usr_prod #SBATCH --qos=skl_qos_bprod |
| | | qos_special | >256 nodes | >24:00:00 (max = 64 nodes for user) | | 182000 | 40 | | #SBATCH --qos=qos_special request to superc@cineca.it |
| | | qos_lowprio | max = 64 nodes | 24:00:00 | 64 nodes | 182000 | 0 | | #SBATCH --qos=qos_lowprio |
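The node-count limits for the A3 (Skylake) production queues in the table can be turned into a small lookup. The sketch below is only an illustration: skl_qos_for_nodes is a hypothetical helper (not a SLURM or MARCONI command), and it encodes only the node thresholds, not walltime or per-user limits.

```shell
#!/bin/bash
# skl_qos_for_nodes is a hypothetical helper, not a SLURM or MARCONI command.
# It prints the directives the table associates with a given A3 node count.
skl_qos_for_nodes() {
    local nodes="$1"
    if [ "$nodes" -lt 1 ]; then
        echo "invalid node count" >&2
        return 1
    elif [ "$nodes" -le 64 ]; then
        # 1 to 64 nodes: plain production partition, no QOS.
        echo "#SBATCH -p skl_usr_prod"
    elif [ "$nodes" -le 256 ]; then
        # 65 to 256 nodes: production partition plus the bprod QOS.
        echo "#SBATCH -p skl_usr_prod"
        echo "#SBATCH --qos=skl_qos_bprod"
    else
        # More than 256 nodes: qos_special, which the table lists as
        # available on request to superc@cineca.it.
        echo "#SBATCH --qos=qos_special"
    fi
}

skl_qos_for_nodes 128
```

Called with 128 nodes, the helper prints the two directives for skl_qos_bprod.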

...